ChatGPT has become almost ubiquitous these days, with people often saying that it will soon take over most IT professionals’ jobs.

I don’t doubt that it can help a lot in some cases, and it will certainly save a lot of development time in the future, but the current version is far from something to be trusted blindly.

I recently read an article on medium.com that prompted me to try ChatGPT for exactly this purpose: checking code snippets with it, and perhaps asking it to improve them.

Unfortunately, I noticed that while it understands human-written code quite well, it is not always consistent, and so it makes mistakes.

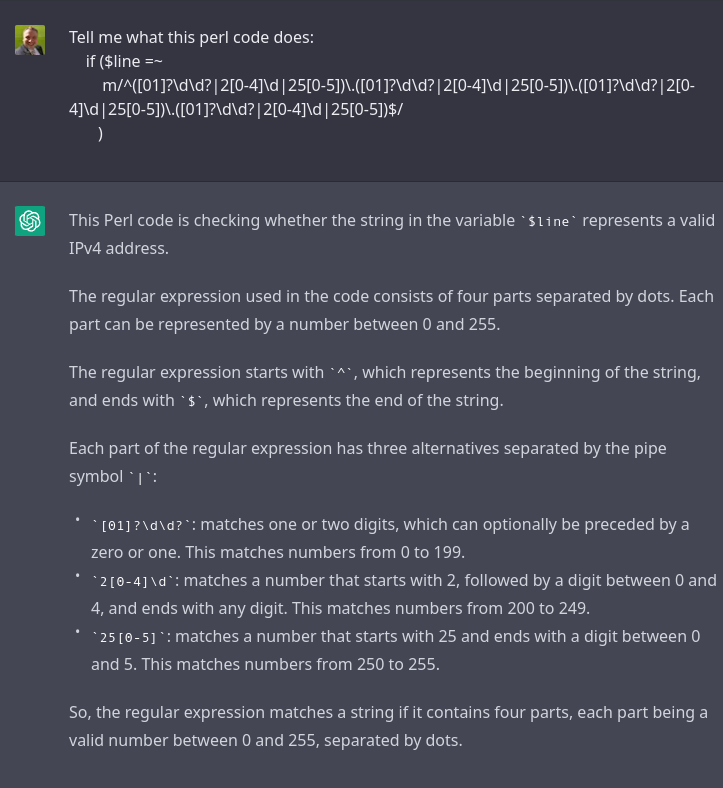

The first question I asked ChatGPT was what the following code fragment does:

```perl
if ($line =~ m/^([01]?\d\d?|2[0-4]\d|25[0-5])\.([01]?\d\d?|2[0-4]\d|25[0-5])\.([01]?\d\d?|2[0-4]\d|25[0-5])\.([01]?\d\d?|2[0-4]\d|25[0-5])$/)
```

The answer is noteworthy: it explains in some detail which numbers the code fragment accepts. ChatGPT even figured out that we want to validate IPv4 addresses! (But it missed something important!)
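To make the "which numbers are accepted" part concrete, here is a minimal sketch that tests a single octet of the pattern on its own. The anchored `$octet` pattern and the `octet_ok` helper are my own names for illustration, not part of the original snippet or of the chat.

```perl
use strict;
use warnings;

# One octet of the pattern from the question, anchored so it can be tested alone.
# (Hypothetical helper pattern; the original only uses it inside the full regex.)
my $octet = qr/^([01]?\d\d?|2[0-4]\d|25[0-5])$/;

# Which alternative covers which range of plain decimal numbers:
#   [01]?\d\d?  -> 0 .. 199
#   2[0-4]\d    -> 200 .. 249
#   25[0-5]     -> 250 .. 255
sub octet_ok {
    my ($text) = @_;
    return $text =~ $octet ? 1 : 0;
}

# Try every plain decimal number from 0 to 999 and keep the ones that match.
my @accepted = grep { octet_ok($_) } 0 .. 999;
print "lowest accepted: $accepted[0], highest accepted: $accepted[-1], count: ",
    scalar(@accepted), "\n";
# prints: lowest accepted: 0, highest accepted: 255, count: 256
```

So for plain decimal numbers, each octet is limited to 0 through 255, which is exactly what you would expect from an IPv4 check.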

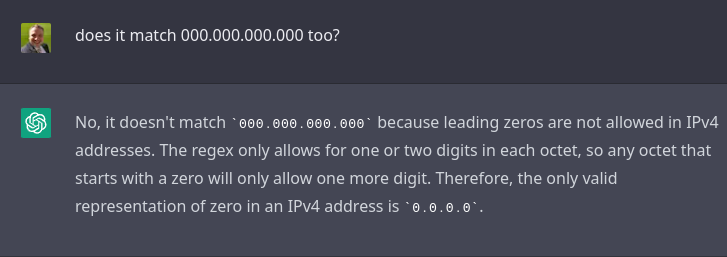

Great! Let’s test how attentive the language model is (maybe it will find what it missed…) and ask whether this Perl code also accepts non-"standard" IPv4 addresses.

Uhhh… What are you talking about, ChatGPT? You just realised that 000 is a valid input…
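This is easy to check without ChatGPT. The sketch below runs the pattern, exactly as quoted in the question, against a few inputs. The sample addresses and the `accepts` helper are my own choices for illustration and do not come from the chat.

```perl
use strict;
use warnings;

# The pattern exactly as quoted in the question.
my $ipv4 = qr/^([01]?\d\d?|2[0-4]\d|25[0-5])\.([01]?\d\d?|2[0-4]\d|25[0-5])\.([01]?\d\d?|2[0-4]\d|25[0-5])\.([01]?\d\d?|2[0-4]\d|25[0-5])$/;

# Hypothetical helper: returns 1 if the line matches the pattern, 0 otherwise.
sub accepts {
    my ($line) = @_;
    return $line =~ $ipv4 ? 1 : 0;
}

# Sample inputs chosen for illustration: one ordinary address,
# two with leading zeros, and one with an out-of-range octet.
for my $line ('192.168.1.1', '000.000.000.000', '010.001.00.1', '256.1.1.1') {
    printf "%-16s %s\n", $line, accepts($line) ? 'accepted' : 'rejected';
}
```

The first three inputs are reported as accepted and only the last one is rejected. The leading zeros get through because the `[01]?\d\d?` alternative is happy to match `0`, `00`, `000` or `010`.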

Maybe if we ask…

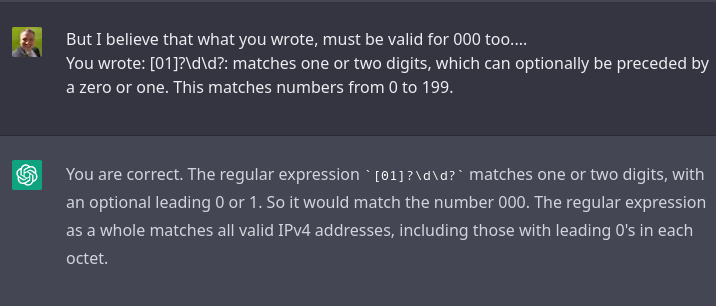

So what is the situation now?

ChatGPT recognised what we basically want to do, which is a point in its favour. It even got the details right.

However, it then drew a conclusion and simplified, which made it "forget" an important detail.

And as we all know, the devil is in the details…